The structure of Whole-Play: a bottom-up approach

05.05.14

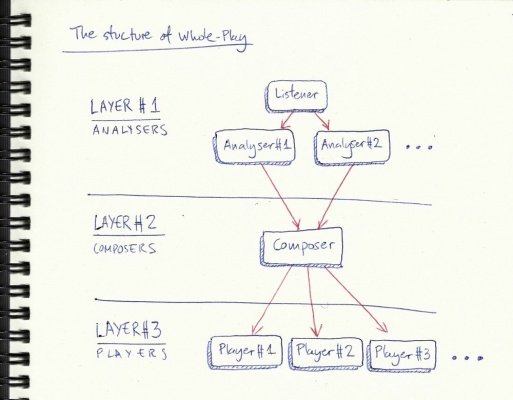

Whole-Play consists of many separate components that interact with one another following well-defined protocols. These components are organised into three layers, from top to bottom:

- The analysers layer. The components in this layer listen to what's going on (i.e. what is the human improviser playing?) and analyse the collected data to extract information about musical elements such as tempo, rhythm, and melodic and harmonic events. Each component analyses one particular musical aspect, and whenever it detects an interesting event, it sends a message down to the layer below.

- The composers layer. This layer typically contains a single component, the Composer, which receives information from the analysers layer and makes compositional decisions accordingly. The Composer then sends messages to the third layer.

- The players layer. Finally, the components here receive messages from the composers layer and produce sound. Each component in this layer can produce sound in its own way: through a software instrument, a synthesis patch, or (I hope, at some point) by driving an external acoustic mechanical instrument.

Each component sends messages to the layer below. (This is actually a simplification: the communication channels are a bit more sophisticated, but this is the essential mechanism.) At the same time, each component runs independently, constantly processing the information it receives and generating its own messages.
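To make the layered design more concrete, here is a minimal Python sketch, assuming each component is a thread with its own inbox queue and that messages flow strictly downwards (the real channels are more sophisticated, as mentioned). The class names (`Component`, `TempoAnalyser`, `Composer`, `LoggingPlayer`), the message dictionaries and the tempo-from-onsets logic are all hypothetical illustrations, not Whole-Play's actual code, and the player only records what it is asked to play instead of producing sound.

```python
import queue
import threading

STOP = object()  # sentinel: tells a component (and the layers below it) to shut down


class Component(threading.Thread):
    """Hypothetical base class: runs independently, reading messages from its own
    inbox and forwarding whatever it produces to the components in the layer below."""

    def __init__(self, name, downstream=None):
        super().__init__(name=name, daemon=True)
        self.inbox = queue.Queue()
        self.downstream = downstream or []

    def handle(self, message):
        """Turn one incoming message into a list of outgoing messages."""
        raise NotImplementedError

    def run(self):
        while True:
            message = self.inbox.get()
            if message is STOP:
                for target in self.downstream:
                    target.inbox.put(STOP)  # propagate shutdown downwards
                return
            for out in self.handle(message):
                for target in self.downstream:
                    target.inbox.put(out)


class TempoAnalyser(Component):
    """Analysers layer (hypothetical): estimates tempo from note-onset times in seconds."""

    def __init__(self, name, downstream):
        super().__init__(name, downstream)
        self.onsets = []

    def handle(self, onset):
        self.onsets.append(onset)
        if len(self.onsets) < 2:
            return []
        gaps = [b - a for a, b in zip(self.onsets, self.onsets[1:])]
        beat = sum(gaps) / len(gaps)  # mean inter-onset interval
        return [{"event": "tempo", "bpm": round(60 / beat)}]


class Composer(Component):
    """Composers layer (hypothetical): only reacts when the detected tempo changes."""

    def __init__(self, name, downstream):
        super().__init__(name, downstream)
        self.bpm = None

    def handle(self, message):
        if message["event"] == "tempo" and message["bpm"] != self.bpm:
            self.bpm = message["bpm"]
            return [{"play": "groove", "bpm": self.bpm}]
        return []


class LoggingPlayer(Component):
    """Players layer stand-in: records what it is asked to play instead of making sound."""

    def __init__(self, name):
        super().__init__(name)
        self.played = []

    def handle(self, message):
        self.played.append(message)
        return []


# Wire the three layers top to bottom and start every component.
player = LoggingPlayer("player")
composer = Composer("composer", [player])
analyser = TempoAnalyser("tempo-analyser", [composer])
for component in (player, composer, analyser):
    component.start()

# Simulate the human improviser playing four evenly spaced notes.
for onset in [0.0, 0.5, 1.0, 1.5]:
    analyser.inbox.put(onset)
analyser.inbox.put(STOP)
player.join()
print(player.played)  # [{'play': 'groove', 'bpm': 120}]
```

Because each component only reads from its own inbox, it can be started, fed and tested in isolation; the `STOP` sentinel passed down the chain shuts the layers down in order, after each one has processed everything queued before it.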

On my first attempt at developing Whole-Play, back in 2010, I quickly got stuck with a myriad of problems and hard-to-track bugs. This eventually led to the project being put on hold (for way too long!) and to the need to redesign its general structure. Now I'm following this three-layer structure, and the first thing I did was create a working framework that defined and tested the communication paths between the layers. This gives me confidence that the project won't get out of control as its complexity grows, mainly because each component works independently of the rest and has very well-defined protocols for communicating with the other components.

This also made me think that a bottom-up approach would be a better development strategy: start with layer three and move up from there. This has several advantages over a top-down approach:

- The players layer is the least sophisticated one. It just has to play sounds based on incoming messages from the composers layer. OK, it's actually not that simple, but it's simpler than the other two, I'm pretty sure. :) This means I can start hearing some results relatively soon, with less danger of getting lost in technical issues and theoretical considerations.

- Having working players in place (and knowing what they're capable of) will help sharpen the focus when working on the upper layers.

- I might be able to use Whole-Play for side projects well before it's finished, by making use of the components that are already developed. This is appealing because it helps build and maintain enthusiasm (and it'll be fun too!). For example, right now I'm working on a Drummer component (one of the components in layer three), and although I haven't done any work on the two upper layers yet, I can already use the Drummer as a sort of semi-intelligent drum machine by manually writing a script that sends it the appropriate messages. By the way, there's still a lot to do on the Drummer, but some cool stuff is already happening!
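Driving a player directly from a hand-written script, with no analysers or Composer involved, might look roughly like the Python sketch below. The `Drummer` class, its three message types (`tempo`, `pattern`, `play`) and the 16-step pattern strings are hypothetical, chosen only for illustration; the sketch assumes 4/4 time and leaves out anything resembling the real Drummer's musical decisions, simply turning patterns into timestamped hits.

```python
from dataclasses import dataclass, field


def default_pattern():
    # 16 steps per bar (16th notes); "x" marks a hit.
    return {"kick": "x.......x.......", "snare": "....x.......x..."}


@dataclass
class Drummer:
    """Hypothetical layer-three Drummer: turns control messages into timed drum hits."""

    bpm: float = 120.0
    pattern: dict = field(default_factory=default_pattern)
    clock: float = 0.0  # time (seconds) at which the next bar starts
    hits: list = field(default_factory=list)  # (time_in_seconds, drum) pairs

    def receive(self, message):
        kind = message["type"]
        if kind == "tempo":
            self.bpm = message["bpm"]
        elif kind == "pattern":
            self.pattern = message["pattern"]
        elif kind == "play":
            self._play_bars(message["bars"])
        else:
            raise ValueError(f"unknown message type: {kind!r}")

    def _play_bars(self, bars):
        step = 60.0 / self.bpm / 4  # duration of one 16th note, assuming 4/4
        for _ in range(bars):
            for i in range(16):
                for drum, steps in self.pattern.items():
                    if steps[i] == "x":
                        self.hits.append((round(self.clock + i * step, 3), drum))
            self.clock += 16 * step


# A hand-written script standing in for the (not yet written) upper layers.
script = [
    {"type": "tempo", "bpm": 120},
    {"type": "play", "bars": 1},
    {"type": "tempo", "bpm": 60},
    {"type": "pattern", "pattern": {"kick": "x.......x.......", "hat": "x.x.x.x.x.x.x.x."}},
    {"type": "play", "bars": 1},
]

drummer = Drummer()
for message in script:
    drummer.receive(message)
print(drummer.hits)
```

The point of the design is that the Drummer does not care where its messages come from: replacing the script with a Composer later would change the sender, not the player.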

So yes, it's a long way to the top... but I'm on the right path!

1 comment


Mycle

Exciting stuff, Gonzalo! A complex sound organism. Keep it up! M